Resource-Efficient Collaborative Edge Transformer Inference with Hybrid Model Parallelism

Shengyuan Ye, Bei Ouyang, Jiangsu Du, Liekang Zeng, Tianyi Qian, Wenzhong Ou, Xiaowen Chu, Deke Guo, Yutong Lu, Xu Chen

May 2025

Abstract

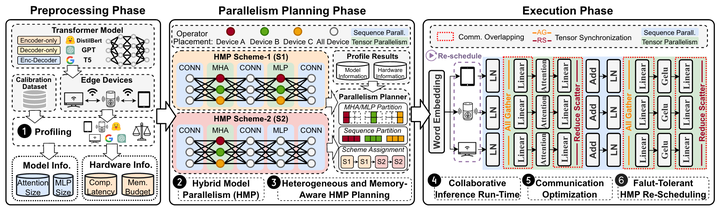

Transformer-based models have unlocked a plethora of powerful intelligent applications at the edge, such as voice assistants in smart homes. Traditional deployment approaches offload the inference workloads to a remote cloud server, which puts substantial pressure on the backbone network and raises users’ privacy concerns. To address this, in-situ inference has recently been recognized for edge intelligence, but it still faces significant challenges stemming from the conflict between intensive workloads and limited on-device computing resources. In this paper, we leverage our observation that many edge environments comprise a rich set of accompanying trusted edge devices with idle resources, and propose Galaxy+, a collaborative edge AI system that breaks the resource walls across heterogeneous edge devices for efficient Transformer inference acceleration. Galaxy+ introduces a novel hybrid model parallelism to orchestrate collaborative inference, along with heterogeneity- and memory-aware parallelism planning to fully exploit the resource potential. To mitigate the impact of tensor synchronizations on inference latency in bandwidth-constrained edge environments, Galaxy+ devises a tile-based fine-grained overlapping of communication and computation. Furthermore, a fault-tolerant re-scheduling mechanism is developed to address device-level resource dynamics, ensuring stable and low-latency inference. Extensive evaluation based on a prototype implementation demonstrates that Galaxy+ outperforms state-of-the-art approaches under various edge environment setups, achieving a 1.2× to 4.24× end-to-end latency reduction. In addition, Galaxy+ can adapt to device-level resource dynamics, swiftly rescheduling and restoring inference in the presence of unexpected straggler devices.

Publication

IEEE Transactions on Mobile Computing (IEEE TMC), 2025.

Shengyuan Ye

Ph.D. student at SMCLab

He is a Ph.D. student at the School of Computer Science and Engineering, Sun Yat-sen University. His research interests include Resource-efficient AI Systems and Applications with Mobile AI.

Jiangsu Du

Assistant Professor, Sun Yat-sen University

He obtained his Ph.D. degree at the School of Computer Science and Engineering, Sun Yat-sen University. He is now an Assistant Professor at Sun Yat-sen University, working on High Performance Computing and Distributed Artificial Intelligence Systems.

Liekang Zeng

Ph.D., Sun Yat-sen University

He obtained his Ph.D. degree at the School of Computer Science and Engineering, Sun Yat-sen University. His research interest lies in building edge intelligence systems with real-time responsiveness, systematic resource efficiency, and theoretical performance guarantees.

Xiaowen Chu

Professor, HKUST(GZ)

Acting Head, Data Science and Analytics Thrust

Dr. Chu is currently a Professor at the Data Science and Analytics Thrust, Information Hub of HKUST(GZ), and an Affiliate Professor in the Department of Computer Science and Engineering, HKUST. His current research interests include GPU Computing, Distributed Machine Learning, Cloud Computing, and Wireless Networks. He is especially interested in the modelling, parallel algorithm design, application optimization, and energy efficiency of GPU computing.

Xu Chen

Professor and Assistant Dean, Sun Yat-sen University

Director, Institute of Advanced Networking & Computing Systems

Xu Chen is a Full Professor with Sun Yat-sen University, Director of the Institute of Advanced Networking and Computing Systems (IANCS), and Vice Director of the National Engineering Research Laboratory of Digital Homes. His research interests include edge computing and cloud computing, federated learning, cloud-native intelligent robots, distributed artificial intelligence, intelligent big data analysis, and computing power networks.

Galaxy+ Overview